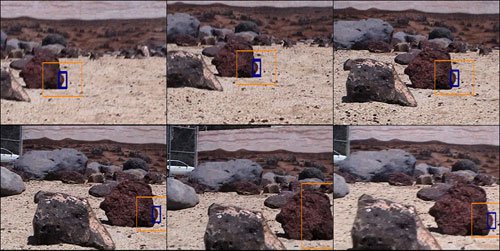

Image sequence (left to right, top to bottom) captured while tracking a rock edge during rover navigation (blue: target lock; orange: search window).

We will develop a new biologically inspired computing engine to perform real-time visual landmark tracking for autonomous navigation over planetary surfaces. The computing engine will have two main components: a self-organizing feature extractor and an adaptive pattern classifier. The classifier algorithm will be based on an adaptive Radial Basis Function (RBF) neural network architecture. RBF algorithms offer advantages for pattern recognition, most notably that they can be implemented practically in parallel hardware for real-time operation. An adaptive RBF computing engine would autonomously track landmark features in real-time video streams, even as the image changes because of the vehicle's own movement. Such tracking would allow a vehicle to determine its relative position, heading, and velocity more precisely, and to home in on desired targets or waypoints. A successful adaptive RBF landmark tracker would therefore be general purpose and readily applicable to multiple NASA/JPL missions, including spacecraft and rover navigation.
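
To make the classifier idea concrete, the following is a minimal sketch of an RBF-style pattern classifier with a simple adaptation step. It is an illustration only, not the project's algorithm: the class name `AdaptiveRBFClassifier`, the Gaussian basis function, the fixed `width`, the `learning_rate`, and the nearest-prototype update rule are all assumptions introduced here, and the feature vectors stand in for whatever the self-organizing feature extractor would produce.

```python
import math


def rbf_activation(x, center, width):
    """Gaussian radial basis response of one hidden unit (assumed basis function)."""
    sq_dist = sum((xi - ci) ** 2 for xi, ci in zip(x, center))
    return math.exp(-sq_dist / (2.0 * width ** 2))


class AdaptiveRBFClassifier:
    """Hypothetical RBF classifier: one Gaussian unit per stored prototype."""

    def __init__(self, width=1.0, learning_rate=0.2):
        self.width = width                  # shared spread of every unit (assumption)
        self.learning_rate = learning_rate  # how far a prototype moves per update
        self.centers = []                   # prototype feature vectors
        self.labels = []                    # class label of each prototype

    def add_prototype(self, features, label):
        self.centers.append(list(features))
        self.labels.append(label)

    def scores(self, x):
        """Sum the unit activations per class label."""
        totals = {}
        for center, label in zip(self.centers, self.labels):
            activation = rbf_activation(x, center, self.width)
            totals[label] = totals.get(label, 0.0) + activation
        return totals

    def classify(self, x):
        """Return the label with the largest summed activation."""
        totals = self.scores(x)
        return max(totals, key=totals.get)

    def adapt(self, x, label):
        """Move the best-matching prototype of `label` toward x.

        This stands in for adaptation to appearance changes caused by
        vehicle motion; the actual update rule is not given in the source.
        """
        best_index, best_activation = None, -1.0
        for i, (center, lab) in enumerate(zip(self.centers, self.labels)):
            if lab != label:
                continue
            activation = rbf_activation(x, center, self.width)
            if activation > best_activation:
                best_index, best_activation = i, activation
        if best_index is None:
            # No prototype for this label yet: store the sample as a new one.
            self.add_prototype(x, label)
            return
        rate = self.learning_rate
        self.centers[best_index] = [
            ci + rate * (xi - ci) for xi, ci in zip(x, self.centers[best_index])
        ]


# Toy two-dimensional features; real inputs would come from the feature extractor.
tracker = AdaptiveRBFClassifier(width=1.0, learning_rate=0.5)
tracker.add_prototype([0.0, 0.0], "landmark")
tracker.add_prototype([5.0, 5.0], "background")
label = tracker.classify([0.5, 0.2])   # nearest in feature space to "landmark"
tracker.adapt([1.0, 1.0], "landmark")  # prototype drifts toward the new view
```

Each hidden unit's activation depends only on its own center and the input, so the per-unit computations are independent of one another, which is the property that makes this family of networks a natural fit for parallel hardware.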

The PI for this work is Tien-Hsin Chao of JPL.